MCP Deployment Guide Q4`18 documentation

MCP Deployment Guide¶

This guide provides instructions for deploying Mirantis Cloud Platform (MCP) and is intended for Mirantis deployment and QA engineers.

Preface¶

This documentation provides information on how to use Mirantis products to deploy cloud environments. The information is for reference purposes and is subject to change.

Intended audience¶

This documentation is intended for deployment engineers, system administrators, and developers; it assumes that the reader is already familiar with network and cloud concepts.

Documentation history¶

The following table lists the released revisions of this documentation:

| Revision date | Description |

|---|---|

| February 8, 2019 | Q4`18 GA |

Introduction¶

MCP enables you to deploy and manage cloud platforms and their dependencies. These include OpenStack and Kubernetes based clusters.

The deployment can be performed automatically through MCP DriveTrain or using the manual deployment procedures.

The MCP DriveTrain deployment approach is based on the bootstrap automation of the Salt Master node that contains MAAS hardware nodes provisioner as well as on the automation of an MCP cluster deployment using the Jenkins pipelines. This approach significantly reduces your time and eliminates possible human errors.

The manual deployment approach provides the ability to deploy all the components of the cloud solution in a very granular fashion.

The guide also covers the deployment procedures for additional MCP components including OpenContrail, Ceph, StackLight, NFV features.

See also

Plan the deployment¶

The configuration of your MCP installation depends on the individual requirements that should be met by the cloud environments.

The detailed plan of any MCP deployment is determined on a per-cloud basis. For the MCP reference architecture and design overview, see: MCP Reference Architecture: Plan an OpenStack environment or MCP Reference Architecture: Plan a Kubernetes cluster depending on the type of your deployment.

Caution

Kubernetes support termination notice

Starting with the MCP 2019.2.5 update, the Kubernetes component is no longer supported as a part of the MCP product. This implies that Kubernetes is not tested and not shipped as an MCP component. Although the Kubernetes Salt formula is available in the community driven SaltStack formulas ecosystem, Mirantis takes no responsibility for its maintenance.

Customers looking for a Kubernetes distribution and Kubernetes lifecycle management tools are encouraged to evaluate the Mirantis Kubernetes-as-a-Service (KaaS) and Docker Enterprise products.

At the same time, MCP provides a flexible reduced prebuilt mirror image that you can customize depending on the needs of your MCP deployment after the initial bootstrap is performed. The usage of the prebuilt mirror image is essential in case of an offline MCP deployment scenario. The prebuilt mirror image contains the Debian package mirror (Aptly or flat deb repositories), Docker images mirror (Registry), Git repositories mirror, and mirror of the Mirantis Ubuntu VM cloud images (VCP). This guide includes the steps required for the case with the additional prebuilt VM deployment on the Foundation node.

Prepare for the deployment¶

Create a project repository¶

An MCP cluster deployment configuration is stored in a Git repository created on a per-customer basis. This section instructs you on how to manually create and prepare your project repository for an MCP deployment.

Before you start this procedure, create a Git repository in your version control system, such as GitHub.

To create a project repository manually:

Log in to any computer.

Create an empty directory and change to that directory. In the example below, it is

mcpdoc.Initialize your project repository:

git initExample of system response:

Initialized empty Git repository in /Users/crh/Dev/mcpdoc/.git/

Add your repository to the directory you have created:

git remote add origin <YOUR-GIT-REPO-URL>

Verify that Git and your local repository are set up correctly by creating and pushing a test file to your project repository. Run the following example commands:

Note

The example commands below require the Git and GitHub credentials to be created and configured for your project repository.

git remote add origin https://github.com/example_account/mcpdoc.git git config --local user.email "example@example.com" git config --local user.name "example_gituser" git config -l echo "#. mcpdoc" >> README.md git add README.md git commit -m "first commit" git push -u origin master

Create the following directories for your deployment metadata model:

mkdir -p classes/cluster mkdir nodes

Add the Reclass variable to your bash profile by verifying your current directory using pwd and adding the string that exports the Reclass variable with the output value of the pwd command:

pwd vim ~/.bash_profile export RECLASS_REPO=<PATH_TO_YOUR_DEV_DIRECTORY>

Example of system response:

/Users/crh/Dev/mcpdoc/ "~/.bash_profile" 13L, 450C export RECLASS_REPO="/Users/crh/Dev/mcpdoc/"

Log out and log back in.

Verify that your

~/.bash_profileis sourced:echo $RECLASS_REPO

The command must show the value of your

RECLASS_REPOvariable.Example of system response:

/Users/crh/Dev/mcpdoc/

Add the Mirantis Reclass module to your repository as a submodule:

git submodule add https://github.com/Mirantis/reclass-system-salt-model ./classes/system/

Example of system response:

Cloning into '<PATH_TO_YOUR_DEV_DIRECTORY>/classes/system'... remote: Counting objects: 8923, done. remote: Compressing objects: 100% (214/214), done. remote: Total 8923 (delta 126), reused 229 (delta 82), pack-reused 8613 Receiving objects: 100% (8923/8923), 1.15 MiB | 826.00 KiB/s, done. Resolving deltas: 100% (4482/4482), done. Checking connectivity... done.

Update the submodule:

git submodule sync git submodule update --init --recursive --remote

Add your changes to a new commit:

git add -A

Commit your changes:

git commitAdd your commit message.

Example of system response:

[master (root-commit) 9466ada] Initial Commit 2 files changed, 4 insertions(+) create mode 100644 .gitmodules create mode 160000 classes/system

Push your changes:

git pushProceed to Create a deployment metadata model.

Create a deployment metadata model¶

In a Reclass metadata infrastructural model, the data is stored as a set of several layers of objects, where objects of a higher layer are combined with objects of a lower layer, that allows for as flexible configuration as required.

The MCP metadata model has the following levels:

- Service level includes metadata fragments for individual services that are stored in Salt formulas and can be reused in multiple contexts.

- System level includes sets of services combined in a such way that the installation of these services results in a ready-to-use system.

- Cluster level is a set of models that combine already created system objects into different solutions. The cluster module settings override any settings of service and system levels and are specific for each deployment.

The model layers are firmly isolated from each other. They can be aggregated on a south-north direction using service interface agreements for objects on the same level. Such approach allows reusing of the already created objects both on service and system levels.

This section describes how to generate the cluster level metadata model for your MCP cluster deployment using the Model Designer web UI. The tool used to generate the model is Cookiecutter, a command-line utility that creates projects from templates.

While generating a metadata model, you can enable automated encryption

of all secrets for the Salt Master node .iso file.

Note

The Model Designer web UI is only available within Mirantis. The Mirantis deployment engineers can access the Model Designer web UI using their Mirantis corporate username and password.

The workflow of a model creation includes the following stages:

Defining the model through the Model Designer web UI.

Optional. Tracking the execution of the model creation pipeline in the Jenkins web UI.

Obtaining the generated model to your email address or getting it published to the project repository directly.

Note

If you prefer publishing to the project repository, verify that the dedicated repository is configured correctly and Jenkins can access it. See Create a project repository for details.

As a result, you get a generated deployment model and can customize it to fit specific use-cases. Otherwise, you can proceed with the base infrastructure deployment.

Enable all secrets encryption¶

The Model Designer UI supports passing a private key

to enable automated encryption of secrets.yml during the Salt Master node

.iso file generation.

To enable all secrets encryption in the Model Designer UI:

Generate a private PGP key locally. For example:

mkdir -p ~/mcp-temp-gpg-key ; cd ~/mcp-temp-gpg-key cd cat <<EOF > gpg-batch.txt Key-Type: 1 Key-Length: 4096 Expire-Date: 0 Name-Real: gpg-demo.com Name-Email: saltmasterdemo@example.com EOF export GNUPGHOME="$(pwd)/gpghome" ; mkdir -p gpghome ; chmod 0700 gpghome gpg --gen-key --batch < gpg-batch.txt gpg --export-secret-key -a saltmasterdemo@example.com > gpgkey.asc gpg --list-secret-keys

Copy the generated private PGP key:

cat gpgkey.ascExample of system response:

-----BEGIN PGP PRIVATE KEY BLOCK----- Version: GnuPG v1 lQcYBFyKM7kBEADGU6P/Lp9YRMY/vLw7VOF5Sox1rnu2lz6YqnNQ2J+ZHVlPA9R ........

Proceed with the metadata model generation as described in Define the deployment model. While generating the metadata model, enable the following parameters:

- In General -> Services section, select Secrets Encryption Enabled

- In Infra -> Salt Master section, paste the private key to the Secrets Encryption Private Key field

Proceed to the metadata model generation.

Define the deployment model¶

This section instructs you on how to define the cluster level metadata model through the web UI using Cookiecutter. Eventually, you will obtain a generic deployment configuration that can be overriden afterwards.

Note

The Model Designer web UI is only available within Mirantis. The Mirantis deployment engineers can access the Model Designer web UI using their Mirantis corporate username and password.

Note

Currently, Cookiecutter can generate models with basic configurations. You may need to manually customize your model after generation to meet specific requirements of your deployment, for example, four interfaces bonding.

To define the deployment model:

- Log in to the web UI.

- Go to Integration dashboard > Models > Model Designer.

- Click Create Model. The Create Model page opens.

- Configure your model by selecting a corresponding tab and editing as

required:

- Configure General deployment parameters. Click Next.

- Configure Infrastructure related parameters. Click Next.

- Configure Product related parameters. Click Next.

- Verify the model on the Output summary tab. Edit if required.

- Click Confirm to trigger the

Generate reclass cluster separated-products-autoJenkins pipeline. If required, you can track the success of the pipeline execution in the Jenkins web UI.

If you selected the Send to e-mail address publication option on the General parameters tab, you will receive the generated model to the e-mail address you specified in the Publication options > Email address field on the Infrastructure parameters tab. Otherwise, the model will automatically be pushed to your project repository.

General deployment parameters¶

The tables in this section outline the general configuration parameters that you can define for your deployment model through the Model Designer web UI. Consult the Define the deployment model section for the complete procedure.

The General deployment parameters wizard includes the following sections:

- Basic deployment parameters cover basic deployment parameters

- Services deployment parameters define the platform you need to generate the model for

- Networking deployment parameters cover the generic networking setup for a dedicated management interface and two interfaces for the workload. The two interfaces for the workload are in bond and have tagged sub-interfaces for the Control plane (Control network/VLAN) and Data plane (Tenant network/VLAN) traffic. The PXE interface is not managed and is leaved to default DHCP from installation. Setups for the NFV scenarios are not covered and require manual configuration.

| Parameter | Default JSON output | Description |

|---|---|---|

| Cluster name | cluster_name: deployment_name |

The name of the cluster that will be used as cluster/<cluster_name>/

in the project directory structure |

| Cluster domain | cluster_domain: deploy-name.local |

The name of the domain that will be used as part of the cluster FQDN |

| Public host | public_host: ${_param:openstack_proxy_address} |

The name or IP address of the public endpoint for the deployment |

| Reclass repository | reclass_repository: https://github.com/Mirantis/mk-lab-salt-model.git |

The URL to your project Git repository containing your models |

| Cookiecutter template URL | cookiecutter_template_url: git@github.com:Mirantis/mk2x-cookiecutter-reclass-model.git |

The URL to the Cookiecutter template repository |

| Cookiecutter template branch | cookiecutter_template_branch: master |

The branch of the Cookiecutter template repository to use, master

by default. Use refs/tags/<mcp_version> to generate the model that

corresponds to a specific MCP release version. For example, 2017.12.

Other possible values include stable and testing. |

| Shared Reclass URL | shared_reclass_url: ssh://mcp-jenkins@gerrit.mcp.mirantis.net:29418/salt-models/reclass-system.git |

The URL to the shared system model to be used as a Git submodule for the MCP cluster |

| MCP version | mcp_version: stable |

Version of MCP to use, stable by default. Enter the release version

number, for example, 2017.12. Other possible values are:

nightly, testing. For nightly, use

cookiecutter_template_branch: master. |

| Cookiecutter template credentials | cookiecutter_template_credentials: gerrit |

Credentials to Gerrit to fetch the Cookiecutter templates repository. The parameter is used by Jenkins |

| Deployment type | deployment_type: physical |

The supported deployment types include:

|

| Publication method | publication_method: email |

The method to obtain the template. Available options include:

|

| Parameter | Default JSON output | Description |

|---|---|---|

| Platform |

|

The platform to generate the model for:

|

| StackLight enabled | stacklight_enabled: 'True' |

Enables a StackLight LMA sub-cluster. |

| Gainsight service enabled | gainsight_service_enabled: 'False' |

Enables support for the Salesforce/Gainsight service |

| Salesforce notifications enabled | sf_notifications_enabled: 'False' |

Enables sending of Alertmanager notifications to Salesforce |

| Ceph enabled | ceph_enabled: 'True' |

Enables a Ceph sub-cluster. |

| CI/CD enabled | cicd_enabled: 'True' |

Enables a CI/CD sub-cluster. |

| OSS enabled | oss_enabled: 'True' |

Enables an OSS sub-cluster. |

| Benchmark node enabled | bmk_enabled: 'False' |

Enables a benchmark node. False, by default. |

| Barbican enabled | barbican_enabled: 'False' |

Enables the Barbican service |

| Backend for Barbican | barbican_backend: dogtag |

The backend for Barbican |

| Parameter | Default JSON output | Description |

|---|---|---|

| DNS Server 01 | dns_server01: 8.8.8.8 |

The IP address of the dns01 server |

| DNS Server 02 | dns_server02: 1.1.1.1 |

The IP address of the dns02 server |

| Deploy network subnet | deploy_network_subnet: 10.0.0.0/24 |

The IP address of the deploy network with the network mask |

| Deploy network gateway | deploy_network_gateway: 10.0.0.1 |

The IP gateway address of the deploy network |

| Control network subnet | control_network_subnet: 10.0.1.0/24 |

The IP address of the control network with the network mask |

| Tenant network subnet | tenant_network_subnet: 10.0.2.0/24 |

The IP address of the tenant network with the network mask |

| Tenant network gateway | tenant_network_gateway: 10.0.2.1 |

The IP gateway address of the tenant network |

| Control VLAN | control_vlan: '10' |

The Control plane VLAN ID |

| Tenant VLAN | tenant_vlan: '20' |

The Data plane VLAN ID |

| NTP servers Added since 2019.2.6 update | 0.pool.ntp.org,1.pool.ntp.org |

The comma-separated list of Network Time Protocol (NTP)

servers. You can also configure multiple NTP servers as required,

for example, server1.ntp.org,server2.ntp.org,server3.ntp.org. |

Publish the deployment model to a project repository¶

If you selected the option to receive the generated deployment model to your email address and customized it as required, you need to apply the model to the project repository.

To publish the metadata model, push the changes to the project Git repository:

git add *

git commit –m "Initial commit"

git pull -r

git push --set-upstream origin master

See also

Deploy MCP DriveTrain¶

To reduce the deployment time and eliminate possible human errors, Mirantis recommends that you use the semi-automated approach to the MCP DriveTrain deployment as described in this section.

Caution

The execution of the CLI commands used in the MCP Deployment

Guide requires root privileges. Therefore, unless explicitly

stated otherwise, run the commands as a root user or use

sudo.

The deployment of MCP DriveTrain bases on the bootstrap automation of the Salt Master node. On a Reclass model creation, you receive the configuration drives by the email that you specified during the deployment model generation.

Depending on the deployment type, you receive the following configuration drives:

- For an online and offline deployment, the configuration drive for the

cfg01VM that is used in cloud-init to set up a virtual machine with Salt Master, MAAS provisioner, Jenkins server, and local Git server installed on it. - For an offline deployment, the configuration drive for the APT VM that is used in cloud-init to set up a virtual machine with all required repositories mirrors.

| # | Description |

|---|---|

| 1 | Manually deploy and configure the Foundation node as described in Prerequisites for MCP DriveTrain deployment. |

| 2 | Create the deployment model using the Model Designer web UI as described in Create a deployment metadata model. |

| 3 | Obtain the pre-built ISO configuration drive(s) with the Reclass deployment metadata model to you email. If required, customize and regenerate the configuration drives as described in Generate configuration drives manually. |

| 4 | Bootstrap the APT node. Optional, for an offline deployment only. For details, see: Deploy the APT node. |

| 5 | Bootstrap the Salt Master node that contains MAAS provisioner, Jenkins server, and local Git server. For details, see: Deploy the Salt Master node. |

| 6 | Deploy the remaining bare metal servers using the MAAS provisioner. For details, see: Provision physical nodes using MAAS and Deploy physical nodes. |

| 7 | Deploy MCP CI/CD using Jenkins as described in Deploy CI/CD. |

Prerequisites for MCP DriveTrain deployment¶

Before you proceed with the actual deployment, verify that you have performed the following steps:

Deploy the Foundation physical node using one of the initial versions of Ubuntu Xenial, for example, 16.04.1.

Use any standalone hardware node where you can run a KVM-based

day01virtual machine with an access to the deploy/control network. The Foundation node will host the Salt Master node that also includes the MAAS provisioner by default. For the offline case deployment, the Foundation node will also host the mirror VM.Depending on your case, proceed with one of the following options:

If you do not have a deployment metadata model:

Create a model using the Model Designer UI as described in Create a deployment metadata model.

Note

For an offline deployment, select the Offline deployment and Local repositories options under the Repositories section on the Infrastructure parameters tab.

Customize the obtained configuration drives as described in Generate configuration drives manually. For example, enable custom user access.

If you use an already existing model that does not have configuration drives, or you want to generate updated configuration drives, proceed with Generate configuration drives manually.

Configure the following bridges on the Foundation node:

br-mgmfor the management network andbr-ctlfor the control network.Log in to the Foundation node through IPMI.

Note

If the IPMI network is not reachable from the management or control network, add the

br-ipmibridge for the IPMI network or any other network that is routed to the IPMI network.Create PXE bridges to provision network on the foundation node:

brctl addbr br-mgm brctl addbr br-ctl

Install the

br-ctlutility:apt install bridge-utils

Add the bridges definition for

br-mgmandbr-ctlto/etc/network/interfaces. Use definitions from your deployment metadata model.Example:

auto br-mgm iface br-mgm inet static address 172.17.17.200 netmask 255.255.255.192 bridge_ports bond0

Restart networking from the IPMI console to bring the bonds up.

Verify that the foundation node bridges are up by checking the output of the ip a show command:

ip a show br-ctl

Example of system response:

8: br-ctl: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000 link/ether 00:1b:21:93:c7:c8 brd ff:ff:ff:ff:ff:ff inet 172.17.45.241/24 brd 172.17.45.255 scope global br-ctl valid_lft forever preferred_lft forever inet6 fe80::21b:21ff:fe93:c7c8/64 scope link valid_lft forever preferred_lft forever

Depending on your case, proceed with one of the following options:

- If you perform an online deployment, proceed to Deploy the Salt Master node.

- If you perform the offline deployment or online deployment with local mirrors, proceed to Deploy the APT node.

Deploy the APT node¶

MCP enables you to deploy the whole MCP cluster without access to the

Internet. On creating the metadata model, along with the configuration drive

for the cfg01 VM, you will obtain a preconfigured QCOW2 image that will

contain packages, Docker images, operating system images, Git repositories,

and other software required specifically for the offline deployment.

This section describes how to deploy the apt01 VM using the

prebuilt configuration drive.

Warning

Perform the procedure below only in case of an offline deployment or when using a local mirror from the prebuilt image.

To deploy the APT node:

Verify that you completed steps described in Prerequisites for MCP DriveTrain deployment.

Log in to the Foundation node.

Note

Root privileges are required for following steps. Execute the commands as a root user or use

sudo.Download the latest version of the prebuilt

http://images.mirantis.com/mcp-offline-image-<BUILD-ID>.qcow2image for theaptnode from http://images.mirantis.com.In the

/var/lib/libvirt/images/directory, create anapt01/subdirectory where the offline mirror image will be stored:Note

You can create and use a different subdirectory in

/var/lib/libvirt/images/. If that is the case, verify that you specify the correct directory for theVM_*DISKvariables described in next steps.mkdir -p /var/lib/libvirt/images/apt01/

Save the image on the Foundation node as

/var/lib/libvirt/images/apt01/system.qcow2.Copy the configuration ISO drive for the APT VM provided with the metadata model for the offline image to, for example,

/var/lib/libvirt/images/apt01/.Caution

By default, the prebuilt image does not have a possibility to log in to.

Note

If you are using an already existing model that does not have configuration drives, or you want to generate updated configuration drives, for example, with an unlocked

rootlogin for debugging purposes, proceed with Generate configuration drives manually.cp /path/to/prepared-drive/apt01-config.iso /var/lib/libvirt/images/apt01/apt01-config.iso

Deploy the APT node:

Download the shell script from GitHub:

export MCP_VERSION="master" wget https://raw.githubusercontent.com/Mirantis/mcp-common-scripts/${MCP_VERSION}/predefine-vm/define-vm.sh

Make the script executable, export the required variables:

chmod +x define-vm.sh export VM_NAME="apt01.<CLUSTER_DOMAIN>" export VM_SOURCE_DISK="/var/lib/libvirt/images/apt01/system.qcow2" export VM_CONFIG_DISK="/var/lib/libvirt/images/apt01/apt01-config.iso"

The

CLUSTER_DOMAINvalue is the cluster domain name used for the model. See Basic deployment parameters for details.Note

You may add other optional variables that have default values and change them depending on your deployment configuration. These variables include:

VM_MGM_BRIDGE_NAME="br-mgm"VM_CTL_BRIDGE_NAME="br-ctl"VM_MEM_KB="12589056"VM_CPUS="4"

The recommended

VM_MEM_KBfor the Salt Master node is12589056(or more depending on your cluster size) that is 12 GB of RAM. For large clusters, you should also increaseVM_CPUS.The recommended

VM_MEM_KBfor the local mirror node is8388608(or more) that is 8 GB of RAM.The

br-mgmandbr-ctlvalues are the names of the Linux bridges. See Prerequisites for MCP DriveTrain deployment for details. Custom names can be passed to a VM definition using theVM_MGM_BRIDGE_NAMEandVM_CTL_BRIDGE_NAMEvariables accordingly.Run the shell script:

./define-vm.sh

Start the

apt01VM:virsh start apt01.<CLUSTER_DOMAIN>

For MCP versions prior to the 2019.2.14 maintenance update, perform the following additional steps:

SSH to the

apt01node.Verify the certificate:

openssl x509 -checkend 1 -in /var/lib/docker/swarm/certificates/swarm-node.crt

If the certificate has expired, restart Docker Swarm to regenerate it:

systemctl stop docker || true rm -rf /var/lib/docker/swarm/* systemctl restart docker sleep 5 docker ps docker swarm init --advertise-addr 127.0.0.1 sleep 5 cd /etc/docker/compose/docker/ docker stack deploy --compose-file docker-compose.yml docker sleep 5 cd /etc/docker/compose/aptly/ docker stack deploy --compose-file docker-compose.yml aptly sleep 5 docker ps

After completing the steps above, you obtain the apt01 node that contains

only the pre-built content. Now, you can proceed with

Deploy the Salt Master node. Once you deploy the Salt Master node,

you will be able to customize the content of the local mirror, as described in

Customize the prebuilt mirror node.

Deploy the Salt Master node¶

The Salt Master node acts as a central control point for the clients that are called Salt minion nodes. The minions, in their turn, connect back to the Salt Master node.

This section describes how to set up a virtual machine with Salt Master, MAAS provisioner, Jenkins server, and local Git server. The procedure is applicable to both online and offline MCP deployments.

To deploy the Salt Master node:

Log in to the Foundation node.

Note

Root privileges are required for following steps. Execute the commands as a root user or use

sudo.In case of an offline deployment, replace the content of the

/etc/apt/sources.listfile with the following lines:deb [arch=amd64] http://<local_mirror_url>/ubuntu xenial-security main universe restricted deb [arch=amd64] http://<local_mirror_url>/ubuntu xenial-updates main universe restricted deb [arch=amd64] http://<local_mirror_url>/ubuntu xenial main universe restricted

Create a directory for the VM system disk:

Note

You can create and use a different subdirectory in

/var/lib/libvirt/images/. If that is the case, verify that you specify the correct directory for theVM_*DISKvariables described in next steps.mkdir -p /var/lib/libvirt/images/cfg01/

Download the

day01image for thecfg01node:wget http://images.mirantis.com/cfg01-day01-<BUILD_ID>.qcow2 -O \ /var/lib/libvirt/images/cfg01/system.qcow2

Substitute

<BUILD_ID>with the required MCP Build ID, for example,2019.2.0.Copy the configuration ISO drive for the

cfg01VM provided with the metadata model for the offline image to, for example,/var/lib/libvirt/images/cfg01/cfg01-config.iso.Note

If you are using an already existing model that does not have configuration drives, or you want to generate updated configuration drives, for example, with an unlocked

rootlogin for debugging purposes, proceed with Generate configuration drives manually.Caution

Make sure to securely back up the configuration ISO drive image. This image contains critical information required to re-install your

cfg01node in case of storage failure, including master key for all encrypted secrets in the cluster metadata model.Failure to back up the configuration ISO image may result in loss of ability to manage MCP in certain hardware failure scenarios.

cp /path/to/prepared-drive/cfg01-config.iso /var/lib/libvirt/images/cfg01/cfg01-config.iso

Create the Salt Master VM domain definition using the example script:

Download the shell scripts from GitHub with the required MCP release version. For example:

export MCP_VERSION="2019.2.0" git clone https://github.com/Mirantis/mcp-common-scripts -b release/${MCP_VERSION}

Make the script executable and export the required variables:

cd mcp-common-scripts/predefine-vm/ export VM_NAME="cfg01.[CLUSTER_DOMAIN]" export VM_SOURCE_DISK="/var/lib/libvirt/images/cfg01/system.qcow2" export VM_CONFIG_DISK="/var/lib/libvirt/images/cfg01/cfg01-config.iso"

The

CLUSTER_DOMAINvalue is the cluster domain name used for the model. See Basic deployment parameters for details.Note

You may add other optional variables that have default values and change them depending on your deployment configuration. These variables include:

VM_MGM_BRIDGE_NAME="br-mgm"VM_CTL_BRIDGE_NAME="br-ctl"VM_MEM_KB="12589056"VM_CPUS="4"

The recommended

VM_MEM_KBfor the Salt Master node is12589056(or more depending on your cluster size) that is 12 GB of RAM. For large clusters, you should also increaseVM_CPUS.The recommended

VM_MEM_KBfor the local mirror node is8388608(or more) that is 8 GB of RAM.The

br-mgmandbr-ctlvalues are the names of the Linux bridges. See Prerequisites for MCP DriveTrain deployment for details. Custom names can be passed to a VM definition using theVM_MGM_BRIDGE_NAMEandVM_CTL_BRIDGE_NAMEvariables accordingly.Run the shell script:

./define-vm.sh

Start the Salt Master node VM:

virsh start cfg01.[CLUSTER_DOMAIN]

Log in to the Salt Master virsh console with the user name and password that you created in step 4 of the Generate configuration drives manually procedure:

virsh console cfg01.[CLUSTER_DOMAIN]

If you use local repositories, verify that

mk-pipelinesare present in/home/repo/mkandpipeline-libraryis present in/home/repo/mcp-ciaftercloud-initfinishes. If not, fix the connection to local repositories and run the/var/lib/cloud/instance/scripts/part-001script.Verify that the following states are successfully applied during the execution of

cloud-init:salt-call state.sls linux.system,linux,openssh,salt salt-call state.sls maas.cluster,maas.region,reclass

Otherwise, fix the pillar and re-apply the above states.

In case of using

kvm01as the Foundation node, perform the following steps on it:Depending on the deployment type, proceed with one of the options below:

For an online deployment, add the following

debrepository to/etc/apt/sources.list.d/mcp_saltstack.list:deb [arch=amd64] https://mirror.mirantis.com/<MCP_VERSION>/saltstack-2017.7/xenial/ xenial main

For an offline deployment or local mirrors case, in

/etc/apt/sources.list.d/mcp_saltstack.list, add the followingdebrepository:deb [arch=amd64] http://<local_mirror_url>/<MCP_VERSION>/saltstack-2017.7/xenial/ xenial main

Install the

salt-minionpackage.Modify

/etc/salt/minion.d/minion.conf:id: <kvm01_FQDN> master: <Salt_Master_IP_or_FQDN>

Restart the

salt-minionservice:service salt-minion restart

Check the output of

salt-keycommand on the Salt Master node to verify that the minion ID ofkvm01is present.

Verify the Salt infrastructure¶

Before you proceed with the deployment, validate the Reclass model and node pillars.

To verify the Salt infrastructure:

Log in to the Salt Master node.

Verify the Salt Master pillars:

reclass -n cfg01.<cluster_domain>The

cluster_domainvalue is the cluster domain name that you created while preparing your deployment metadata model. See Basic deployment parameters for details.Verify that the Salt version for the Salt minions is the same as for the Salt Master node, that is currently

2017.7:salt-call --version salt '*' test.version

If required, enable management of the offline mirror VM (

apt01) and customize the VM contents as described in Enable the management of the APT node through the Salt Master node.

Enable the management of the APT node through the Salt Master node¶

In compliance with the security best practices, MCP enables you to connect your offline mirror APT VM to the Salt Master node and manage it as any infrastructure VM on your MCP deployment.

Note

This section is only applicable for the offline deployments where

all repositories are stored on a specific VM deployed using

the MCP apt01 offline image, which is included in the MCP

release artifacts.

For the deployments managed by the MCP 2018.8.0 Build ID or later, you should not manually enable the Salt minion on the offline image VM as it is configured automatically on boot during the APT VM provisioning.

Though, if your want to enable the management of the offline image VM through the Salt Master node on an existing deployment managed by the MCP version below the 2018.8.0 Build ID, you need to perform the procedure included in this section.

To enable the Salt minion on an existing offline mirror node:

Connect to the serial console of your offline image VM, which is included in the pre-built offline APT QCOW image:

virsh console $(virsh list --all --name | grep ^apt01) --force

Log in with the user name and password that you created in step 4 of the Generate configuration drives manually procedure.

Example of system response:

Connected to domain apt01.example.local Escape character is ^]

Press Enter to drop into the root shell.

Configure the Salt minion and start it:

echo "" > /etc/salt/minion echo "master: <IP_address>" > /etc/salt/minion.d/minion.conf echo "id: <apt01.example.local>" >> /etc/salt/minion.d/minion.conf service salt-minion stop rm -f /etc/salt/pki/minion/* service salt-minion start

Quit the serial console by sending the Ctrl + ] combination.

Log in to the Salt Master node.

Verify that you have the offline mirror VM Salt minion connected to your Salt Master node:

salt-key -L | grep apt

The system response should include your offline mirror VM domain name. For example:

apt01.example.local

Verify that you can access the Salt minion from the Salt Master node:

salt apt01\* test.ping

Verify the Salt states are mapped to the offline mirror VM:

salt apt01\* state.show_top

Now, you can manage your offline mirror APT VM from the Salt Master node. At this point, the Salt Master node does not manage the offline mirror content. If you need to adjust the content of your offline mirror, refer to Customize the prebuilt mirror node.

Configure MAAS for bare metal provisioning¶

Before you proceed with provisioning of the remaining bare metal nodes, configure MAAS as described below.

To configure MAAS for bare metal provisioning:

Log in to the MAAS web UI through

http://<infra_config_deploy_address>:5240/MAASwith the following credentials:- Username:

mirantis - Password:

r00tme

- Username:

Go to the Subnets tab.

Select the fabric that is under the deploy network.

In the VLANs on this fabric area, click the VLAN under the VLAN column where the deploy network subnet is.

In the Take action drop-down menu, select Provide DHCP.

Adjust the IP range as required.

Note

The number of IP addresses should not be less than the number of the planned VCP nodes.

Click Provide DHCP to submit.

If you use local package mirrors:

Note

The following steps are required only to specify the local Ubuntu package repositories that are secured by a custom GPG key and used mainly for the offline mirror images prior the MCP version 2017.12.

- Go to Settings > Package repositories.

- Click Actions > Edit on the Ubuntu archive repository.

- Specify the GPG key of the repository in the Key field.

The key can be obtained from the

aptly_gpg_public_keyparameter in the cluster level Reclass model. - Click Save.

Provision physical nodes using MAAS¶

Physical nodes host the Virtualized Control Plane (VCP) of your Mirantis Cloud Platform deployment.

This section describes how to provision the physical nodes using the MAAS service that you have deployed on the Foundation node while deploying the Salt Master node.

The servers that you must deploy include at least:

- For OpenStack:

kvm02andkvm03infrastructure nodescmp0compute node

- For Kubernetes:

kvm02andkvm03infrastructure nodesctl01,ctl02,ctl03controller nodescmp01andcmp02compute nodes

You can provision physical nodes automatically or manually:

- An automated provisioning requires you to define IPMI and MAC addresses in your Reclass model. After you enforce all servers, the Salt Master node commissions and provisions them automatically.

- A manual provisioning enables commissioning nodes through the MAAS web UI.

Before you proceed with the physical nodes provisioning, you may want to customize the commissioning script, for example, to set custom NIC names. For details, see: Add custom commissioning scripts.

Warning

Before you proceed with the physical nodes provisioning, verify that BIOS settings enable PXE booting from NICs on each physical server.

Automatically commission and provision the physical nodes¶

This section describes how to define physical nodes in a Reclass model to automatically commission and then provision the nodes through Salt.

Automatically commission the physical nodes¶

You must define all IPMI credentials in your Reclass model to access physical servers for automated commissioning. Once you define the nodes, Salt enforces them into MAAS and starts commissioning.

To automatically commission physical nodes:

Define all physical nodes under

classes/cluster/<cluster>/infra/maas.ymlusing the following structure.For example, to define the

kvm02node:maas: region: machines: kvm02: interface: mac: 00:25:90:eb:92:4a power_parameters: power_address: kvm02.ipmi.net power_password: password power_type: ipmi power_user: ipmi_user

Note

To get MAC addresses from IPMI, you can use the ipmi tool. Usage example for Supermicro:

ipmitool -U ipmi_user-P passowrd -H kvm02.ipmi.net raw 0x30 0x21 1| tail -c 18

(Optional) Define the IP address on the first (PXE) interface. By default, it is assigned automatically and can be used as is.

For example, to define the

kvm02node:maas: region: machines: kvm02: interface: mac: 00:25:90:eb:92:4a mode: "static" ip: "2.2.3.15" subnet: "subnet1" gateway: "2.2.3.2"

(Optional) Define a custom disk layout or partitioning per server in MAAS. For more information and examples on how to define it in the model, see: Add a custom disk layout per node in the MCP model.

(Optional) Modify the commissioning process as required. For more information and examples, see: Add custom commissioning scripts.

Once you have defined all physical servers in your Reclass model, enforce the nodes:

Caution

For an offline deployment, remove the

deb-srcrepositories from commissioning before enforcing the nodes, since these repositories are not present on the reduced offlineaptimage node. To remove these repositories, you can enforce MAAS to rebuildsources.list. For example:export PROFILE="mirantis" export API_KEY=$(cat /var/lib/maas/.maas_credentials) maas login ${PROFILE} http://localhost:5240/MAAS/api/2.0/ ${API_KEY} REPO_ID=$(maas $PROFILE package-repositories read | jq '.[]| select(.name=="main_archive") | .id ') maas $PROFILE package-repository update ${REPO_ID} disabled_components=multiverse maas $PROFILE package-repository update ${REPO_ID} "disabled_pockets=backports"

The default

PROFILEvariable ismirantis. You can find your deployment-specific value for this parameter inparameters:maas:region:admin:usernameof your Reclass model.For details on building a custom list of repositories, see: MAAS GitHub project.

salt-call maas.process_machinesAll nodes are automatically commissioned.

Verify the status of servers either through the MAAS web UI or using the salt call command:

salt-call maas.machines_statusThe successfully commissioned servers appear in the

readystatus.Enforce the interfaces configuration defined in the model for servers:

salt-call state.sls maas.machines.assign_ip

To protect any static IP assignment defined, for example, in the model, configure a reserved IP range in MAAS on the management subnet.

(Optional) Enforce the disk custom configuration defined in the model for servers:

salt-call state.sls maas.machines.storage

Verify that all servers have correct NIC names and configurations.

Proceed to Provision the automatically commissioned physical nodes.

Provision the automatically commissioned physical nodes¶

Once you successfully commission your physical nodes, you can start the provisioning.

To provision the automatically commissioned physical nodes through MAAS:

Log in to the Salt Master node.

Run the following command:

salt-call maas.deploy_machinesCheck the status of the nodes:

salt-call maas.machines_status local: ---------- machines: - hostname:kvm02,system_id:anc6a4,status:Deploying summary: ---------- Deploying: 1

When all servers have been provisioned, perform the verification of the servers` automatic registration by running the salt-key command on the Salt Master node. All nodes should be registered. For example:

salt-key Accepted Keys: cfg01.bud.mirantis.net cmp001.bud.mirantis.net cmp002.bud.mirantis.net kvm02.bud.mirantis.net kvm03.bud.mirantis.net

Now, proceed to Deploy physical nodes.

Manually commission and provision the physical nodes¶

This section describes how to discover, commission, and provision the physical nodes using the MAAS web UI.

Manually discover and commission the physical nodes¶

You can discover and commission your physical nodes manually using the MAAS web UI.

To discover and commission physical nodes manually:

Power on a physical node.

In the MAAS UI, verify that the server has been discovered.

On the Nodes tab, rename the discovered host accordingly. Click Save after each renaming.

In the Settings tab, configure the Commissioning release and the Default Minimum Kernel Version to Ubuntu 16.04 TLS ‘Xenial Xerus’ and Xenial (hwe-16.04), respectively.

Note

The above step ensures that the NIC naming convention uses the predictable schemas, for example,

enp130s0f0rather thaneth0.In the Deploy area, configure the Default operating system used for deployment and Default OS release used for deployment to Ubuntu and Ubuntu 16.04 LTS ‘Xenial Xerus’, respectively.

Leave the remaining parameters as defaults.

(Optional) Modify the commissioning process as required. For more information and examples, see: Add custom commissioning scripts.

Commission the node:

- From the Take Action drop-down list, select Commission.

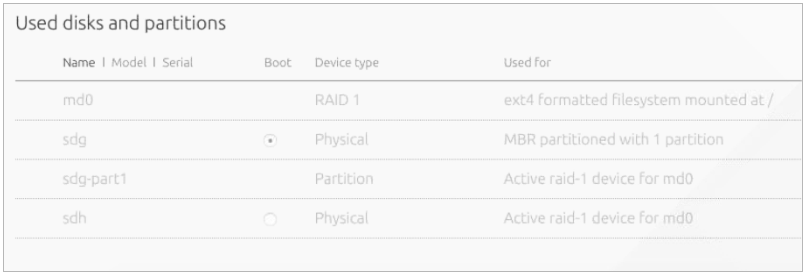

- Define a storage schema for each node.

- On the Nodes tab, click the required node link from the list.

- Scroll down to the Available disks and partitions section.

- Select two SSDs using check marks in the left column.

- Click the radio button to make one of the disks the boot target.

- Click Create RAID to create an MD

raid1volume. - In RAID type, select

RAID 1. - In File system, select

ext4. - Set

/as Mount point. - Click Create RAID.

The Used disks and partitions section should now look as follows:

Repeat the above steps for each physical node.

Proceed to Manually provision the physical nodes.

Manually provision the physical nodes¶

Start the manual provisioning of the physical nodes with the control plane

kvm02 and kvm03 physical nodes, and then proceed with the compute

cmp01 node deployment.

To manually provision the physical nodes through MAAS:

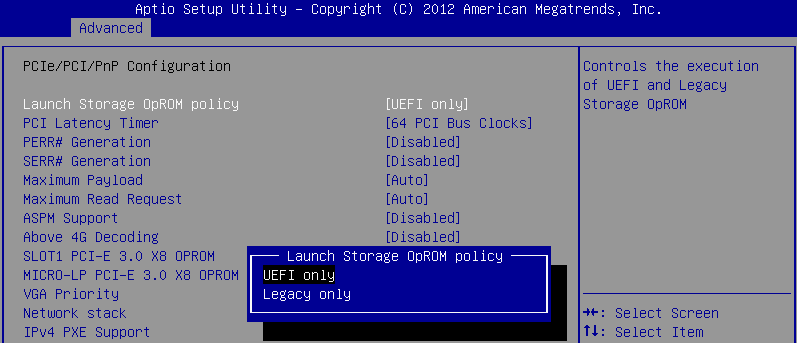

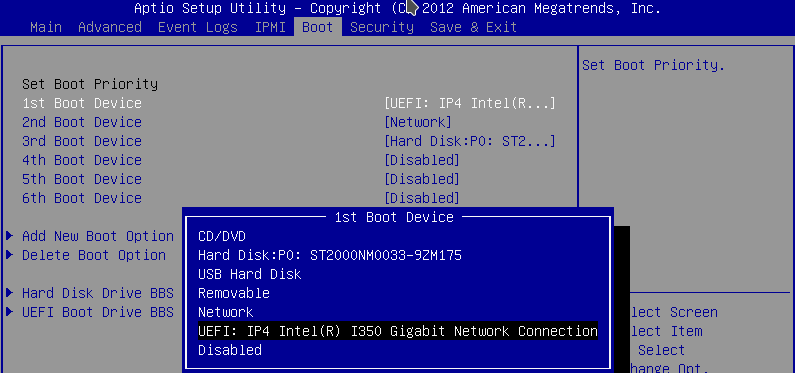

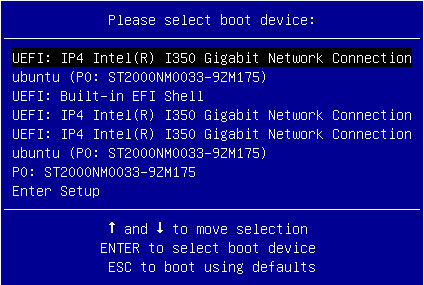

- Verify that the boot order in the physical nodes’ BIOS is set in

the following order:

- PXE

- The physical disk that was chosen as the boot target in the Maas UI.

- Log in to the MAAS web UI.

- Click on a node.

- Click the Take Action drop-down menu and select Deploy.

- In the Choose your image area, verify that

Ubuntu 16.04 LTS 'Xenial Xerus'with theXenial(hwe-16.04)kernel is selected. - Click Go to deploy the node.

- Repeat the above steps for each node.

Now, proceed to Deploy physical nodes.

See also

Deploy physical nodes¶

After you provision physical nodes as described in Provision physical nodes using MAAS, follow the instruction below to deploy physical nodes intended for an OpenStack-based MCP cluster. If you plan to deploy a Kubernetes-based MCP cluster, proceed with steps 1-2 of the Kubernetes Prerequisites procedure.

Caution

To avoid the lack of memory for the network driver and ensure its

proper operation, specify the minimum reserved kernel memory in your Reclass

model on the cluster level for a particular hardware node. For example, use

/cluster/<cluster_name>/openstack/compute/init.yml for the OpenStack

compute nodes and /cluster/<cluster_name>/infra/kvm.yml for the KVM

nodes.

linux:

system:

kernel:

sysctl:

vm.min_free_kbytes: <min_reserved_memory>

Set the vm.min_free_kbytes value to 4194304 for a node with more

than 96 GB of RAM. Otherwise, set not more than 5% of the total RAM on the

node.

Note

To change the default kernel version, perform the steps described in Manage kernel version.

To deploy physical servers:

Log in to the Salt Master node.

Verify that the

cfg01key has been added to Salt and your host FQDN is shown properly in theAccepted Keysfield in the output of the following command:salt-key

Verify that all pillars and Salt data are refreshed:

salt "*" saltutil.refresh_pillar salt "*" saltutil.sync_all

Verify that the Reclass model is configured correctly. The following command output should show top states for all nodes:

python -m reclass.cli --inventory

To verify that the rebooting of the nodes, which will be performed further, is successful, create the trigger file:

salt -C 'I@salt:control or I@nova:compute or I@neutron:gateway or I@ceph:osd' \ cmd.run "touch /run/is_rebooted"

To prepare physical nodes for VCP deployment, apply the basic Salt states for setting up network interfaces and SSH access. Nodes will be rebooted.

Warning

If you use

kvm01as a Foundation node, the execution of the commands below will also reboot the Salt Master node.Caution

All hardware nodes must be rebooted after executing the commands below. If the nodes do not reboot for a long time, execute the below commands again or reboot the nodes manually.

Verify that you have a possibility to log in to nodes through IPMI in case of emergency.

For KVM nodes:

salt --async -C 'I@salt:control' cmd.run 'salt-call state.sls \ linux.system.repo,linux.system.user,openssh,linux.network;reboot'

For compute nodes:

salt --async -C 'I@nova:compute' pkg.install bridge-utils,vlan

salt --async -C 'I@nova:compute' cmd.run 'salt-call state.sls \ linux.system.repo,linux.system.user,openssh,linux.network;reboot'

For gateway nodes, execute the following command only for the deployments with OVS setup with physical gateway nodes:

salt --async -C 'I@neutron:gateway' cmd.run 'salt-call state.sls \ linux.system.repo,linux.system.user,openssh,linux.network;reboot'

The targeted KVM, compute, and gateway nodes will stop responding after a couple of minutes. Wait until all of the nodes reboot.

Verify that the targeted nodes are up and running:

salt -C 'I@salt:control or I@nova:compute or I@neutron:gateway or I@ceph:osd' \ test.ping

Check the previously created trigger file to verify that the targeted nodes are actually rebooted:

salt -C 'I@salt:control or I@nova:compute or I@neutron:gateway' \ cmd.run 'if [ -f "/run/is_rebooted" ];then echo "Has not been rebooted!";else echo "Rebooted";fi'

All nodes should be in the

Rebootedstate.Verify that the hardware nodes have the required network configuration. For example, verify the output of the ip a command:

salt -C 'I@salt:control or I@nova:compute or I@neutron:gateway or I@ceph:osd' \ cmd.run "ip a"

Deploy VCP¶

The virtualized control plane (VCP) is hosted by KVM nodes deployed by MAAS. Depending on the cluster type, the VCP runs Kubernetes or OpenStack services, database (MySQL), message queue (RabbitMQ), Contrail, and support services, such as monitoring, log aggregation, and a time-series metric database. VMs can be added to or removed from the VCP allowing for easy scaling of your MCP cluster.

After the KVM nodes are deployed, Salt is used to configure Linux networking,

appropriate repositories, host name, and so on by running the linux Salt

state against these nodes. The libvirt packages configuration, in its turn,

is managed by running the libvirt Salt state.

Prepare KVM nodes to run the VCP nodes¶

To prepare physical nodes to run the VCP nodes:

On the Salt Master node, prepare the node operating system by running the Salt linux state:

salt-call state.sls linux -l info

Warning

Some formulas may not correctly deploy on the first run of this command. This could be due to a race condition in running the deployment of nodes and services in parallel while some services are dependent on others. Repeat the command execution. If an immediate subsequent run of the command fails again, reboot the affected physical node and re-run the command.

Prepare physical nodes operating system to run the controller node:

Proceed to Create and provision the control plane VMs.

Verify the salt-common and salt-minion versions¶

To verify the version deployed with the state:

Log in to the physical node console.

To verify the

salt-commonversion, run:apt-cache policy salt-common

To verify the

salt-minionversion, run:apt-cache policy salt-minion

The output for the commands above must show the 2017.7 version.

If you have different versions installed, proceed with

Install the correct versions of salt-common and salt-minion.

Install the correct versions of salt-common and salt-minion¶

This section describes the workaround for salt.virt to properly inject

minion.conf.

To manually install the required version of salt-common and salt-minion:

Log in to the physical node console

Change the version to

2017.7in/etc/apt/sources.list.d/salt.list:deb [arch=amd64] http://repo.saltstack.com/apt/ubuntu/16.04/amd64/2017.7/dists/ xenial main

Sync the packages index files:

apt-get updateVerify the versions:

apt-cache policy salt-common apt-cache policy salt-minion

If the wrong versions are installed, remove them:

apt-get remove salt-minion apt-get remove salt-common

Install the required versions of

salt-commonandsalt-minion:apt-get install salt-common=2017.7 apt-get install salt-minion=2017.7

Restart the

salt-minionservice to ensure connectivity with the Salt Master node:service salt-minion stop && service salt-minion start

Verify that the required version is installed:

apt-cache policy salt-common apt-cache policy salt-minion

Repeat the procedure on each physical node.

Partitioning of a VCP node¶

Starting from the Q4`18 MCP release, the VCP images contain the prebuilt

partitioning table. The main VM disk, which is vda, has the following

partitions:

vda1- 1 MB partition required for GPTvda2- 1 GB boot partitionvda3- Partition with LVM

The mountpoints selection is based on the recommendations from Center for Internet Security (CIS) and inlcude the following:

roothome/var/log/var/log/audit/tmp/var/tmp

Example of a partition table for a proxy node:

root@prx01:# lsblk /dev/vda

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

vda 252:0 0 20G 0 disk

├─vda2 252:2 0 1002M 0 part /boot

├─vda3 252:3 0 19G 0 part

│ ├─vg0-home 253:1 0 100M 0 lvm /home

│ ├─vg0-var_tmp 253:4 0 500M 0 lvm /var/tmp

│ ├─vg0-tmp 253:2 0 500M 0 lvm /tmp

│ ├─vg0-root 253:0 0 9.5G 0 lvm /

│ ├─vg0-var_log_audit 253:5 0 500M 0 lvm /var/log/audit

│ └─vg0-var_log 253:3 0 2.9G 0 lvm /var/log

└─vda1 252:1 0 1M 0 part

Specifying the VCP network/disk metadata¶

Each VCP node has the size parameter associated with it.

The size parameter is represented by the

salt:control:cluster:internal:node:<VCP_NAME>:size path in Reclass, where

<VCP_NAME> is the name of your VCP node. For example, for prx01:

root@cfg01:~# salt kvm01* pillar.items salt:control:cluster:internal:node:prx01:size --out json

{

"kvm01.<CLUSTER_NAME>.local": {

"salt:control:cluster:internal:node:prx01:size": "openstack.proxy"

}

}

The size parameter defines disk, network, RAM, and CPU metadata per a VCP

node class. For example:

root@cfg01:~# salt kvm01* pillar.items salt:control:size:openstack.control --out json

{

"kvm01.<CLUSTER_NAME>.local": {

"salt:control:size:openstack.control": {

"net_profile": "default",

"ram": 32768,

"cpu": 8,

"disk_profile": "small"

}

}

}

The disk_profile parameter is the profile that describes the disk

configuration for a VCP node. You can extend a VCP image and connect it to

a VM. For example:

root@cfg01:~# salt kvm01* pillar.items virt:disk --out json

{

"kvm01.<CLUSTER_NAME>.local": {

"virt:disk": {

"small": [

{

"system": {

"size": 8000

}

}

]

}

}

}

Passing the cloud-init data to a VCP node¶

By default, a VCP node is bootstrapped through cloud-init. You can set the

cloud-init user_data either on the cluster or node levels. The node level

configuration overrides the cloud_init data passed on the cluster level.

The user_data configuration example on the cluster level:

salt:

control:

enabled: true

virt_enabled: true

cluster:

mycluster:

domain: neco.virt.domain.com

engine: virt

# Cluster global settings

seed: cloud-init

cloud_init:

user_data:

disable_ec2_metadata: true

resize_rootfs: True

timezone: UTC

ssh_deletekeys: True

ssh_genkeytypes: ['rsa', 'dsa', 'ecdsa']

ssh_svcname: ssh

locale: en_US.UTF-8

disable_root: true

apt_preserve_sources_list: false

apt:

sources_list: ""

sources:

ubuntu.list:

source: ${linux:system:repo:ubuntu:source}

mcp_saltstack.list:

source: ${linux:system:repo:mcp_saltstack:source}

The user_data configuration example on the node level:

salt:

control:

cluster:

mycluster:

node:

ubuntu1:

provider: node01.domain.com

image: ubuntu.qcow

size: medium

cloud_init:

network_data:

networks:

- <<: *private-ipv4

ip_address: 192.168.0.161

Specifying the cloud-init data to grow an LVM-based VCP node¶

When a VM is spawned, the cloud-init growroot module extends the physical disk to consume all free space. The stages of the partition growth for a VCP node with Logical Volume Management (LVM) include:

The growth of a physical disk, which is performed by the

growrootmodule.To grow a particular physical drive and not the

/mounpoint as it is pointed to LVM, you need to pass the followingcloud_initdata to the cluster level:_param: salt_control_cluster_vcp_lvm_device: '/dev/vda3' salt: control: cluster: internal: seed: cloud-init cloud_init: user_data: growpart: mode: auto devices: - '/' - ${_param:salt_control_cluster_vcp_lvm_device} ignore_growroot_disabled: false

Note

The name of the disk can differ depending on the VCP disk driver. By default,

vdaasvirtiois used.The extension of the LVM physical volume to consume all free disk space.

Configuration example:

_param: salt_control_cluster_vcp_lvm_device: '/dev/vda3' salt: control: cluster: internal: seed: cloud-init cloud_init: user_data: runcmd: - 'if lvs vg0; then pvresize ${_param:salt_control_cluster_vcp_lvm_device}; fi' - 'if lvs vg0; then /usr/bin/growlvm.py --image-layout-file /usr/share/growlvm/image-layout.yml; fi'

The application of the partitioning layout.

The partitioning layout is stored in

salt:control:size:openstack.control:image_layout, which is a dictionary with the following schema:{"$schema": "http://json-schema.org/draft-04/schema#", "title": "Image partition layout", "type": "object", "patternProperties": { ".*": {"$ref": "#/definitions/logical_volume_layout"} }, "definitions": { "logical_volume_layout": { "type": "object", "properties": { "name": { "description": "Logical Volume Name", "type": "string" }, "size": { "description": ( "Size of Logical volume in units of logical extents. " "The number might be volume size in units of " "megabytes. A size suffix of M for megabytes, G for " "gigabytes, T for terabytes, P for petabytes or E for " "exabytes is optional. The number can also be " "expressed as a percentage of the total space in the " "Volume Group with the suffix %VG. Percentage of the " "changeble values like free space is not supported." ), }, "resizefs": { "description": ( "Resize underlying filesystem together with the " "logical volume using fsadm(8)." ), "type": "boolean" }, "vg": { "description": ("Volume group name to resize logical " "volume on."), "type": "string" } }, "additionalProperties": False, "required": ["size"] } }}

The default partitioning layout is specified in the

/srv/salt/reclass/classes/system/defaults/salt/init.ymlfile.Configuration example:

parameters: _param: salt_control_size_image_layout_default: root: size: '30%VG' home: size: '1G' var_log: size: '11%VG' var_log_audit: size: '5G' var_tmp: size: '11%VG' tmp: size: '5G' salt_control_size_image_layout_ceph_mon: ${_param:salt_control_size_image_layout_default} salt_control_size_image_layout_ceph_rgw: ${_param:salt_control_size_image_layout_default}

You can adjust the partitioning layout for a particular

sizethrough a soft type parameter. For example, you can describe the partitioning layout forceph.monas follows:parameters: _param: salt_control_size_image_layout_ceph_mon: root: size: '70%VG' home: size: '500M' var_log: size: '5%VG' var_log_audit: size: '1G' var_tmp: size: '1G' tmp: size: '1G'

Create and provision the control plane VMs¶

The control plane VMs are created on each node by running the salt state.

This state leverages the salt virt module along with some customizations

defined in a Mirantis formula called salt-formula-salt. Similarly to how

MAAS manages bare metal, the salt virt module creates VMs based on

profiles that are defined in the metadata and mounts the virtual disk to add

the appropriate parameters to the minion configuration file.

After the salt state successfully runs against a KVM node where metadata

specifies the VMs placement, these VMs will be started and automatically

added to the Salt Master node.

To create control plane VMs:

Log in to the KVM nodes that do not host the Salt Master node. The correct physical node names used in the installation described in this guide to perform the next step are

kvm02andkvm03.Warning

Otherwise, on running the command in the step below, you will delete the

cfgSalt Master.Verify whether virtual machines are not yet present:

virsh list --name --all | grep -Ev '^(mas|cfg|apt)' | xargs -n 1 virsh destroy virsh list --name --all | grep -Ev '^(mas|cfg|apt)' | xargs -n 1 virsh undefine

Log in to the Salt Master node console.

Verify that the Salt Minion nodes are synchronized by running the following command on the Salt Master node:

salt '*' saltutil.sync_all

Perform the initial Salt configuration:

salt 'kvm*' state.sls salt.minion

Set up the network interfaces and the SSH access:

salt -C 'I@salt:control' cmd.run 'salt-call state.sls \ linux.system.user,openssh,linux.network;reboot'

Warning

This will also reboot the Salt Master node because it is running on top of

kvm01.Log in back to the Salt Master node console.

Run the

libvirtstate:salt 'kvm*' state.sls libvirt

For the OpenStack-based MCP clusters, add

system.salt.control.cluster.openstack_gateway_singletoinfra/kvm.ymlto enable a gateway VM for your OpenStack environment. Skip this step for the Kubernetes-based MCP clusters.Run

salt.controlto create virtual machines. This command also insertsminion.conffiles from KVM hosts:salt 'kvm*' state.sls salt.control

Verify that all your Salt Minion nodes are registered on the Salt Master node. This may take a few minutes.

salt-key

Example of system response:

mon03.bud.mirantis.net msg01.bud.mirantis.net msg02.bud.mirantis.net msg03.bud.mirantis.net mtr01.bud.mirantis.net mtr02.bud.mirantis.net mtr03.bud.mirantis.net nal01.bud.mirantis.net nal02.bud.mirantis.net nal03.bud.mirantis.net ntw01.bud.mirantis.net ntw02.bud.mirantis.net ntw03.bud.mirantis.net prx01.bud.mirantis.net prx02.bud.mirantis.net ...

See also

Deploy CI/CD¶

The automated deployment of the MCP components is performed through CI/CD that is a part of MCP DriveTrain along with SaltStack and Reclass. CI/CD, in its turn, includes Jenkins, Gerrit, and MCP Registry components. This section explains how to deploy a CI/CD infrastructure.

To deploy CI/CD automatically:

Deploy a customer-specific CI/CD using Jenkins as part of, for example, an OpenStack cloud environment deployment:

- Log in to the Jenkins web UI available at

salt_master_management_address:8081with the following credentials:- Username:

admin - Password:

r00tme

- Username:

- Use the Deploy - OpenStack pipeline to deploy

cicdcluster nodes as described in Deploy an OpenStack environment. Start with Step 7 in case of the online deployment and with Step 8 in case of the offline deployment.

- Log in to the Jenkins web UI available at

Once the cloud environment is deployed, verify that the

cicdcluster is up and running.Disable the Jenkins service on the Salt Master node:

For the MCP versions 2018.11.0 and below:

systemctl stop jenkins systemctl disable jenkins

For the MCP versions 2019.2.0 and newer, add following pillars to

infra/config/jenkins.yml:parameters: docker: client: stack: jenkins: service: master: deploy: replicas: 0 slave01: deploy: replicas: 0

Skip the jenkins.client state on the Salt Master node by adding the following pillars to

infra/config/jenkins.yml:parameters: jenkins: client: enabled: false

Refresh pillars on the Salt Master node:

salt-call saltutil.clear_cache && salt-call saltutil.refresh_pillar

For the MCP versions 2019.2.0 and newer, update the Jenkins service configuration in Docker on the Salt Master node:

salt-call state.apply docker.client

See also

Deploy an MCP cluster using DriveTrain¶

After you have installed the MCP CI/CD infrastructure as descibed in Deploy CI/CD, you can reach the Jenkins web UI through the Jenkins master IP address. This section contains procedures explaining how to deploy OpenStack environments and Kubernetes clusters using CI/CD pipelines.

Note

For production environments, CI/CD should be deployed on a per-customer basis.

For testing purposes, you can use the central Jenkins lab that is available for Mirantis employees only. To be able to configure and execute Jenkins pipelines using the lab, you need to log in to the Jenkins web UI with your Launchpad credentials.

Deploy an OpenStack environment¶

This section explains how to configure and launch the OpenStack environment deployment pipeline. This job is run by Jenkins through the Salt API on the functioning Salt Master node and deployed hardware servers to set up your MCP OpenStack environment.

Run this Jenkins pipeline after you configure the basic infrastructure

as described in Deploy MCP DriveTrain.

Also, verify that you have successfully applied the linux and salt

states to all physical and virtual nodes for them not to be disconnected

during network and Salt Minion setup.

Note

For production environments, CI/CD should be deployed on a per-customer basis.

For testing purposes, you can use the central Jenkins lab that is available for Mirantis employees only. To be able to configure and execute Jenkins pipelines using the lab, you need to log in to the Jenkins web UI with your Launchpad credentials.

To automatically deploy an OpenStack environment:

Log in to the Salt Master node.

For the OpenContrail setup, add the version-specific parameters to the

<cluster_name>/opencontrail/init.ymlfile of your Reclass model. For example:parameters: _param: opencontrail_version: 4.1 linux_repo_contrail_component: oc41

Set up network interfaces and the SSH access on all compute nodes:

salt -C 'I@nova:compute' cmd.run 'salt-call state.sls \ linux.system.user,openssh,linux.network;reboot'

If you run OVS, run the same command on physical gateway nodes as well:

salt -C 'I@neutron:gateway' cmd.run 'salt-call state.sls \ linux.system.user,openssh,linux.network;reboot'

Verify that all nodes are ready for deployment:

salt '*' state.sls linux,ntp,openssh,salt.minion

Caution

If any of these states fails, fix the issue provided in the output and re-apply the state before you proceed to the next step. Otherwise, the Jenkins pipeline will fail.

In a web browser, open

http://<ip address>:8081to access the Jenkins web UI.Note

The IP address is defined in the

classes/cluster/<cluster_name>/cicd/init.ymlfile of the Reclass model under thecicd_control_addressparameter variable.Log in to the Jenkins web UI as admin.

Note

To obtain the password for the admin user, run the

salt "cid*" pillar.data _param:jenkins_admin_passwordcommand from the Salt Master node.In the global view, verify that the git-mirror-downstream-mk-pipelines and git-mirror-downstream-pipeline-library pipelines have successfully mirrored all content.

Find the Deploy - OpenStack job in the global view.

Select the Build with Parameters option from the drop-down menu of the Deploy - OpenStack job.

Specify the following parameters:

Deploy - OpenStack environment parameters¶ Parameter Description and values ASK_ON_ERROR If checked, Jenkins will ask either to stop a pipeline or continue execution in case of Salt state fails on any task STACK_INSTALL Specifies the components you need to install. The available values include:

corekvmcicdopenstackovsorcontraildepending on the network plugin.cephstacklightossNote

For the details regarding StackLight LMA (

stacklight) with the DevOps Portal (oss) deployment, see Deploy StackLight LMA with the DevOps Portal.

BATCH_SIZE Added since 2019.2.6 update The batch size for Salt commands targeted for a large amount of nodes. Disabled by default. Set to an absolute number of nodes (integer) or percentage, for example, 20 or 20%. For details, see Configure Salt Master threads and batching. DIST_UPGRADE_NODES Added since 2019.2.8 update Select to run apt-get dist-upgradeon all cluster nodes before deployment. Disabled by default.SALT_MASTER_CREDENTIALS Specifies credentials to Salt API stored in Jenkins, included by default. See View credentials details used in Jenkins pipelines for details. SALT_MASTER_URL Specifies the reachable IP address of the Salt Master node and port on which Salt API listens. For example,

http://172.18.170.28:6969To find out on which port Salt API listens:

- Log in to the Salt Master node.

- Search for the port in the

/etc/salt/master.d/_api.conffile. - Verify that the Salt Master node is listening on that port:

netstat -tunelp | grep <PORT>

STACK_TYPE Specifies the environment type. Use physicalfor a bare metal deploymentClick Build.

Once done, configure the Salt Master node password expiration as described in Modify Salt Master password expiration.

Deploy a multi-site OpenStack environment¶

MCP DriveTrain enables you to deploy several OpenStack environments at the same time.

Note

For production environments, CI/CD should be deployed on a per-customer basis.

For testing purposes, you can use the central Jenkins lab that is available for Mirantis employees only. To be able to configure and execute Jenkins pipelines using the lab, you need to log in to the Jenkins web UI with your Launchpad credentials.

To deploy a multi-site OpenStack environment, repeat the

Deploy an OpenStack environment procedure as many times as you need specifying

different values for the SALT_MASTER_URL parameter.

See also

Deploy a Kubernetes cluster¶

Caution

Kubernetes support termination notice

Starting with the MCP 2019.2.5 update, the Kubernetes component is no longer supported as a part of the MCP product. This implies that Kubernetes is not tested and not shipped as an MCP component. Although the Kubernetes Salt formula is available in the community driven SaltStack formulas ecosystem, Mirantis takes no responsibility for its maintenance.

Customers looking for a Kubernetes distribution and Kubernetes lifecycle management tools are encouraged to evaluate the Mirantis Kubernetes-as-a-Service (KaaS) and Docker Enterprise products.

The MCP Containers as a Service architecture enables you to easily deploy a Kubernetes cluster on bare metal with Calico plugin set for Kubernetes networking.

Caution

OpenContrail 4.x for Kubernetes 1.12 or later is not supported.

This section explains how to configure and launch the Kubernetes cluster deployment pipeline using DriveTrain.

You can enable an external Ceph RBD storage in your Kubernetes cluster as required. For new deployments, enable the corresponding parameters while creating your deployment metadata model as described in Create a deployment metadata model. For existing deployments, follow the Enable an external Ceph RBD storage procedure.

You can also deploy ExternalDNS to set up a DNS management server in order to control DNS records dynamically through Kubernetes resources and make Kubernetes resources discoverable through public DNS servers.

If you have a predeployed OpenStack environment, you can deploy a Kubernetes cluster on top of OpenStack and enable the OpenStack cloud provider functionality. It allows you to leverage Cinder volumes and Neutron LBaaS (Octavia) that enhance the Kubernetes cluster functionality.

Added in 2019.2.3 If you want to enable Helm for managing Kubernetes charts, configure your deployment model as described in Enable Helm support. Once configured, Helm will be deployed on the Kubernetes cluster using the corresponding DriveTrain pipeline.

Depending on your cluster configuration, proceed with one of the sections listed below.

Note

For production environments, CI/CD should be deployed on a per-customer basis.

For testing purposes, you can use the central Jenkins lab that is available for Mirantis employees only. To be able to configure and execute Jenkins pipelines using the lab, you need to log in to the Jenkins web UI with your Launchpad credentials.

Prerequisites¶

Before you proceed with an automated deployment of a Kubernetes cluster, follow the steps below:

- If you have swap enabled on the

ctlandcmpnodes, modify your Kubernetes deployment model as described in Add swap configuration to a Kubernetes deployment model. - Deploy DriveTrain as described in Deploy MCP DriveTrain.

Now, proceed to deploying Kubernetes as described in Deploy a Kubernetes cluster on bare metal.

Deploy a Kubernetes cluster on bare metal¶

This section provides the steps to deploy a Kubernetes cluster on bare metal nodes configured using MAAS with Calico as a Kubernetes networking plugin.

Caution

OpenContrail 4.x for Kubernetes 1.12 or later is not supported.

To automatically deploy a Kubernetes cluster on bare metal nodes:

Verify that you have completed the steps described in Prerequisites.

Log in to the Jenkins web UI as Administrator.

Note

To obtain the password for the admin user, run the

salt "cid*" pillar.data _param:jenkins_admin_passwordcommand from the Salt Master node.Find the k8s_ha_calico heat pipeline job in the global view.

Select the Build with Parameters option from the drop-down menu of the selected job.

Configure the deployment by setting the following parameters as required:

Deployment parameters¶ Parameter Defualt value Description ASK_ON_ERROR False If True, Jenkins will stop on any failure and ask either you want to cancel the pipeline or proceed with the execution ignoring the error.SALT_MASTER_CREDENTIALS <YOUR_SALT_MASTER_CREDENTIALS_ID> The Jenkins ID of credentials for logging in to the Salt API. For example, salt-credentials. See View credentials details used in Jenkins pipelines for details. SALT_MASTER_URL <YOUR_SALT_MASTER_URL> The URL to access the Salt Master node. STACK_INSTALL Select core,k8s,calicoComponents to install. STACK_TEST Empty The names of the cluster components to test. By default, nothing is tested. STACK_TYPE physicalThe type of the cluster. Click Build to launch the pipeline.

Click Full stage view to track the deployment process.

The following table contains the stages details for the deployment with Calico as a Kubernetes networking plugin:

The deploy pipeline workflow¶ # Title Details 1 Create infrastructure Creates a base infrastructure using MAAS. 2 Install core infrastructure - Prepares and validates the Salt Master node and Salt Minion nodes. For example, refreshes pillars and synchronizes custom modules.

- Applies the linux,openssh,salt.minion,ntp states to all nodes.

3 Install Kubernetes infrastructure - Reads the control plane load-balancer address and applies it to the model.

- Generates the Kubernetes certificates.

- Installs the Kubernetes support packages that include Keepalived, HAProxy, containerd, and etcd.

4 Install the Kubernetes control plane and networking plugins - Installs Calico.

- Sets up etcd.

- Installs the control plane nodes.

When the pipeline has successfully executed, log in to any Kubernetes

ctlnode and verify that all nodes have been registered successfully:kubectl get nodes

See also

Deploy ExternalDNS for Kubernetes¶

ExternalDNS deployed on Mirantis Cloud Platform (MCP) allows you to set up a DNS management server for Kubernetes starting with version 1.7. ExternalDNS enables you to control DNS records dynamically through Kubernetes resources and make Kubernetes resources discoverable through public DNS servers. ExternalDNS synchronizes exposed Kubernetes Services and Ingresses with DNS cloud providers, such as Designate, AWS Route 53, Google CloudDNS, and CoreDNS.

ExternalDNS retrieves a list of resources from the Kubernetes API to determine the desired list of DNS records. It synchronizes the DNS service according to the current Kubernetes status.

ExternalDNS can use the following DNS backend providers:

- AWS Route 53 is a highly available and scalable cloud DNS web service. Amazon Route 53 is fully compliant with IPv6.

- Google CloudDNS is a highly available, scalable, cost-effective, and programmable DNS service running on the same infrastructure as Google.

- OpenStack Designate can use different DNS servers including Bind9 and PowerDNS that are supported by MCP.

- CoreDNS is the next generation of SkyDNS that can use etcd to accept updates to DNS entries. It functions as an on-premises open-source alternative to cloud DNS services (DNSaaS). You can deploy CoreDNS with ExternalDNS if you do not have an active DNS backend provider yet.

This section describes how to configure and set up ExternalDNS on a new or existing MCP Kubernetes-based cluster.

Prepare a DNS backend for ExternalDNS¶