High availability in OpenStack

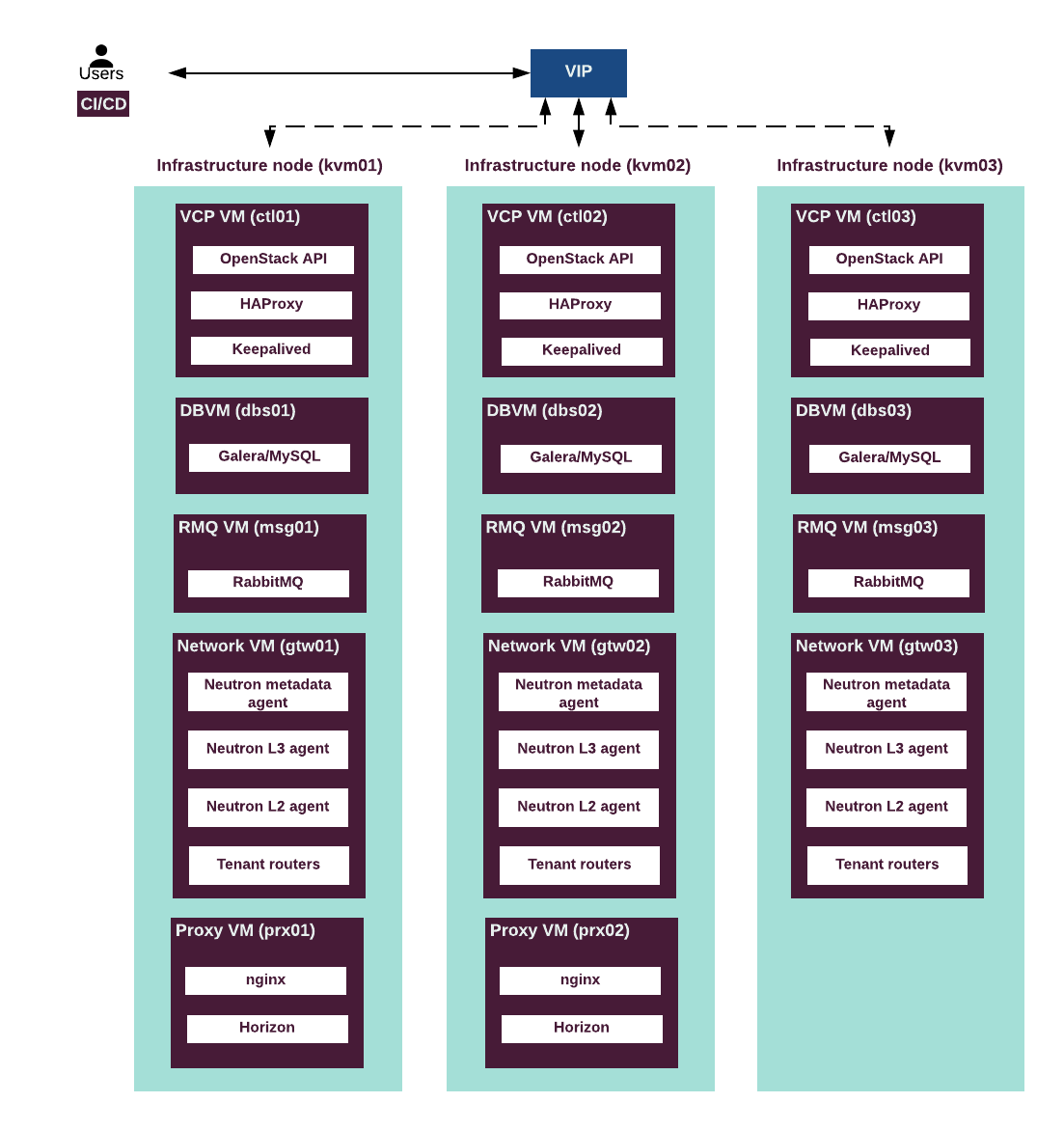

The Virtual Control Plane (VCP) services in MCP OpenStack are highly available and work in active/active or active/standby mode to enable service continuity after a single node failure. Because an OpenStack environment contains both stateless and stateful services, MCP OpenStack handles each type differently to achieve high availability (HA).

- OpenStack microservices

To make the OpenStack stateless services, such as `nova-api`, `nova-conductor`, `glance-api`, `keystone-api`, `neutron-api`, and `nova-scheduler`, resilient to a single node failure, MCP uses the HAProxy load balancer (two or more instances) for failover. MCP runs multiple instances of each service distributed between physical machines to separate the failure domains.

- API availability

MCP OpenStack ensures HA for the stateless API microservices in an active/active configuration using HAProxy with Keepalived. HAProxy provides access to the OpenStack API endpoint by redirecting requests to the active instances of an OpenStack service in a round-robin fashion. It sends API traffic only to the available backends and keeps traffic away from unavailable nodes. The Keepalived daemon provides virtual IP (VIP) failover for the HAProxy server.

Note

Optionally, you can manually configure SSL termination on HAProxy, so that the traffic to OpenStack services can be mirrored for inspection in a security domain.
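The round-robin dispatch described for HAProxy above can be illustrated with a small model. This is only a sketch of the general technique, not HAProxy's implementation: the class `RoundRobinBalancer`, its methods, and the node names `ctl01` to `ctl03` are hypothetical, and health state is set by hand instead of by real health checks.

```python
from itertools import cycle
from typing import Dict, Iterator, List


class RoundRobinBalancer:
    """Toy model of round-robin dispatch that skips unavailable backends."""

    def __init__(self, backends: List[str]) -> None:
        self.backends = list(backends)
        # In a real load balancer this state comes from periodic health checks.
        self.healthy: Dict[str, bool] = {b: True for b in self.backends}
        self._ring: Iterator[str] = cycle(self.backends)

    def mark(self, backend: str, is_up: bool) -> None:
        """Record the result of a (simulated) health check."""
        self.healthy[backend] = is_up

    def pick(self) -> str:
        """Return the next healthy backend in rotation."""
        # Visit each backend at most once per call so the loop terminates.
        for _ in range(len(self.backends)):
            candidate = next(self._ring)
            if self.healthy[candidate]:
                return candidate
        raise RuntimeError("no healthy backend available")


# Hypothetical controller node names, for illustration only.
lb = RoundRobinBalancer(["ctl01", "ctl02", "ctl03"])
first_round = [lb.pick() for _ in range(3)]

lb.mark("ctl02", False)  # simulate a single node failure
after_failure = [lb.pick() for _ in range(4)]
```

With all three backends up, requests rotate through every node. After `ctl02` is marked down, the rotation continues over the two remaining nodes only.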

- Database availability

In MCP OpenStack, the MySQL database server runs in a cluster with synchronous data replication between the MySQL server instances. The cluster is managed by Galera, which creates and runs a MySQL cluster of three database server instances. All servers in the cluster are active. HAProxy directs all write requests to only one server at any time and handles failover.
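The single-writer behavior described above can be sketched as a priority-ordered selection. The source does not say how HAProxy chooses the server, so the "first available server in a fixed order" rule below is an assumption, and the function `choose_writer` and the node names `dbs01` to `dbs03` are hypothetical.

```python
from typing import Dict, List, Optional


def choose_writer(servers: List[str], is_up: Dict[str, bool]) -> Optional[str]:
    """Return the first available server in priority order, or None."""
    for server in servers:
        # Servers missing from the status map are treated as down.
        if is_up.get(server, False):
            return server
    return None


# Hypothetical database node names, for illustration only.
servers = ["dbs01", "dbs02", "dbs03"]
status = {"dbs01": True, "dbs02": True, "dbs03": True}

writer_before = choose_writer(servers, status)  # all nodes up

status["dbs01"] = False  # simulate failure of the current writer
writer_after = choose_writer(servers, status)
```

At any moment only one server receives writes, even though all three are active cluster members. When that server fails, the next available one takes over.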

- Message bus availability

RabbitMQ server provides the messaging bus for OpenStack services. In the MCP reference configuration, RabbitMQ is configured with the `ha-mode` policy to run a cluster in active/active mode. Notification queues are mirrored across the cluster. Messaging queues are not mirrored by default, but are accessible from any node in the cluster.
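RabbitMQ policies select queues by matching a regular expression against the queue name, which is how mirroring can apply to notification queues but not to other messaging queues. The sketch below models that selection. The pattern, the queue names, and the helper `mirrored_queues` are hypothetical, and the actual policy shipped with MCP may differ.

```python
import re
from typing import Dict, List

# Hypothetical policy, shaped like a RabbitMQ policy: a name pattern plus
# a definition. The pattern is an assumption, not the MCP default.
policy: Dict[str, object] = {
    "pattern": r"^notifications\.",
    "definition": {"ha-mode": "all"},
}


def mirrored_queues(queues: List[str], policy: Dict[str, object]) -> List[str]:
    """Return the queues whose names match the policy pattern."""
    pattern = re.compile(str(policy["pattern"]))
    return [q for q in queues if pattern.search(q)]


# Hypothetical queue names, for illustration only.
queues = ["notifications.info", "notifications.error", "conductor", "scheduler"]
mirrored = mirrored_queues(queues, policy)
not_mirrored = [q for q in queues if q not in mirrored]
```

Here only the two `notifications.*` queues fall under the mirroring policy. The remaining queues exist on a single node but stay reachable through any cluster member.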

- OpenStack dashboard

Two instances of the proxy node are deployed. They run the OpenStack dashboard (Horizon) and an NGINX-based reverse proxy that exposes the OpenStack API endpoints.

The following diagram describes the control flow in an HA OpenStack environment: